If Anyone Builds It, Everyone Dies

Why Superhuman AI Would Kill Us All

2025

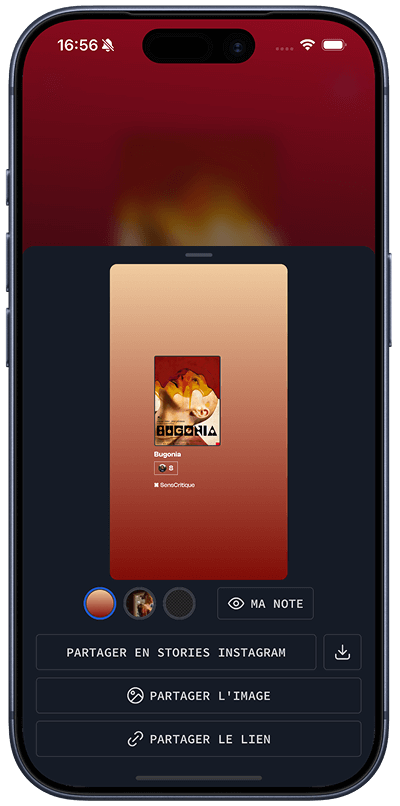

Book by Eliezer Yudkowsky and Nate Soares · 2025 (United States)

Genres: Science, Original version

AI is the greatest threat to our existence that we have ever faced. The scramble to create superhuman AI has put us on the path to extinction – but it’s not too late to change course. Two pioneering researchers in the field, Eliezer Yudkowsky and Nate Soares, explain why artificial superintelligence would be a global suicide bomb and call for an immediate halt to its development. The technology may be complex, but the facts are simple: companies and countries are in a race to build...

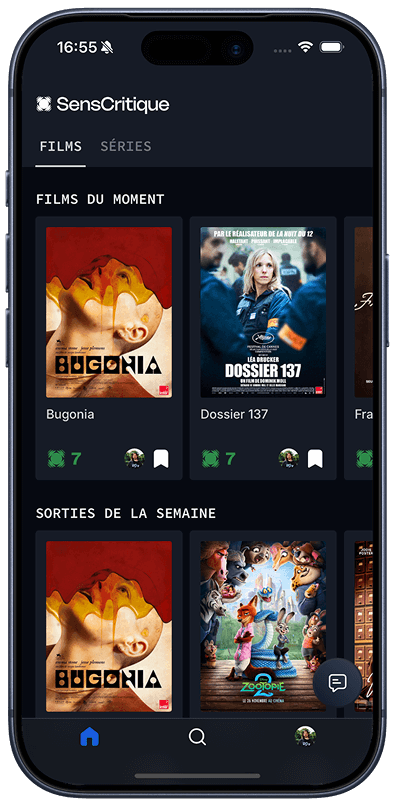

SensCritique dans votre poche.

Téléchargez l’app SensCritique.

Explorez. Vibrez. Partagez.

À proposNotre application mobile Notre extensionAideNous contacterEmploiL'éditoCGUAmazonSOTA

© 2026 SensCritique

Thème